(Part 8 of the Panzer General Portable Project)

Next on the agenda for Panzer General was the domain modeling task. This is not a trivial undertaking because of the sheer number of units, equipment types, and terrain types that the game supports. Fortunately, resources by Scott Wlaschin and Isaac Abraham give a good background, and I have done some F# DDD in the past.

I started with a straightforward entity: the nation. PG supports 14 different nations representing the two sides, the Allies and the Axis. Ignoring the fact that Italy switched sides in 1943, my model looked like this:

```fsharp
type AxisNation =
    | Bulgaria
    | German
    | Hungary
    | Italy
    | Romania

type AlliedNation =
    | France
    | Greece
    | UnitedStates
    | Norway
    | Poland
    | SovietUnion
    | GreatBritian
    | Yougaslovia
    | OtherAllied

type Nation =
    | Allied of AlliedNation
    | Axis of AxisNation
    | Neutral
```

So now in the game, whenever I assign units or cities to a side, I have to assign them to a nation. I can’t just say “this unit is an allied unit” and then deal with the consequences (like a null ref) later. F# forces me to supply a nation every time. The compiler then guarantees that an allied or axis value always carries its specific nation for the rest of the program. This one simple concept eliminates a whole class of potential bugs, which is why F# is such a powerful language for this kind of work. It also means I don’t need unit tests just to check that a nation was assigned.
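To make that guarantee concrete, here is a minimal sketch using compact copies of the types above. The `Side` union and the `sideOf` function are hypothetical. I wrote them only for this illustration; they are not taken from the repository. Because `Nation` is a closed union, the compiler warns about any `match` that misses a case. An `Allied` value also cannot be built without naming which allied nation it is.

```fsharp
// Compact copies of the nation types from the post
type AxisNation = Bulgaria | German | Hungary | Italy | Romania
type AlliedNation = France | Greece | UnitedStates | Norway | Poland | SovietUnion | GreatBritian | Yougaslovia | OtherAllied

type Nation =
    | Allied of AlliedNation
    | Axis of AxisNation
    | Neutral

// Hypothetical: which side of the war a nation fights on
type Side =
    | AlliedSide
    | AxisSide
    | NoSide

// Removing any of these arms produces an incomplete-match warning
let sideOf (nation: Nation) =
    match nation with
    | Allied _ -> AlliedSide
    | Axis _ -> AxisSide
    | Neutral -> NoSide

// `Allied` on its own is a function AlliedNation -> Nation, not a Nation,
// so a specific nation has to be supplied to get a value at all
let britain = Allied GreatBritian
printfn "%A" (sideOf britain)
```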

I also needed a mapping function to convert the NationId (used by the game’s data files) into the Nation type. That was also straightforward:

```fsharp
let getNation nationId =
    match nationId with
    | 2 -> Allied OtherAllied
    | 3 -> Axis Bulgaria
    | 7 -> Allied France
    | 8 -> Axis German
    | 9 -> Allied Greece
    | 10 -> Allied UnitedStates
    | 11 -> Axis Hungary
    | 13 -> Axis Italy
    | 15 -> Allied Norway
    | 16 -> Allied Poland
    | 18 -> Axis Romania
    | 20 -> Allied SovietUnion
    | 23 -> Allied GreatBritian
    | 24 -> Allied Yougaslovia
    | _ -> Neutral
```
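Going the other way, for example when writing data back out, needs the inverse mapping. Here is a minimal sketch, assuming that direction is wanted at all. `getNationId` is a hypothetical name. `Neutral` is the catch-all for every unlisted id, so it has no single id to map back to, and the function therefore returns an option. The round-trip check at the end confirms that the two functions agree on every listed id.

```fsharp
type AxisNation = Bulgaria | German | Hungary | Italy | Romania
type AlliedNation = France | Greece | UnitedStates | Norway | Poland | SovietUnion | GreatBritian | Yougaslovia | OtherAllied
type Nation = Allied of AlliedNation | Axis of AxisNation | Neutral

// The mapping from the post
let getNation nationId =
    match nationId with
    | 2 -> Allied OtherAllied
    | 3 -> Axis Bulgaria
    | 7 -> Allied France
    | 8 -> Axis German
    | 9 -> Allied Greece
    | 10 -> Allied UnitedStates
    | 11 -> Axis Hungary
    | 13 -> Axis Italy
    | 15 -> Allied Norway
    | 16 -> Allied Poland
    | 18 -> Axis Romania
    | 20 -> Allied SovietUnion
    | 23 -> Allied GreatBritian
    | 24 -> Allied Yougaslovia
    | _ -> Neutral

// Hypothetical inverse; the match is exhaustive, so a new nation case
// added to either union will trigger a compiler warning here
let getNationId nation =
    match nation with
    | Allied OtherAllied -> Some 2
    | Axis Bulgaria -> Some 3
    | Allied France -> Some 7
    | Axis German -> Some 8
    | Allied Greece -> Some 9
    | Allied UnitedStates -> Some 10
    | Axis Hungary -> Some 11
    | Axis Italy -> Some 13
    | Allied Norway -> Some 15
    | Allied Poland -> Some 16
    | Axis Romania -> Some 18
    | Allied SovietUnion -> Some 20
    | Allied GreatBritian -> Some 23
    | Allied Yougaslovia -> Some 24
    | Neutral -> None

// Every id used by getNation should survive a round trip
let knownIds = [ 2; 3; 7; 8; 9; 10; 11; 13; 15; 16; 18; 20; 23; 24 ]
let roundTrips = knownIds |> List.forall (fun nationId -> getNationId (getNation nationId) = Some nationId)
printfn "Round trip ok: %b" roundTrips
```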

Moving on from nation, I went to Equipment. This is a bit more complex. There are different types of equipment: movable equipment, flyable equipment, and so on. Instead of doing the typical OO “is-a” exercise, I started with the attributes common to all equipment:

```fsharp
type BaseEquipment = {
    Id: int; Nation: Nation
    IconId: int
    Description: string; Cost: int
    YearAvailable: int; MonthAvailable: int
    YearRetired: int; MonthRetired: int
    MaximumSpottingRange: int
    GroundDefensePoints: int
    AirDefensePoints: int
    NavalDefensePoints: int
}
```

Since all units in PG can be attacked, they all need defense points. Also notice that there is a Nation attribute on the equipment. Once again, F# prevents null refs by lazy programmers (which is me quite often): you can’t have equipment without a nation.
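The month and year fields invite a small helper that checks whether a piece of equipment is in service on a given date. This is a sketch only, with three assumptions. `isAvailable` is a hypothetical helper, the retirement month is treated as exclusive, and the nation unions are trimmed to a couple of cases. F# tuples compare element by element, so `(year, month)` pairs order by year first and then by month. The sample record also shows the nation guarantee in practice: leaving out the `Nation` field is a compile-time error.

```fsharp
// Nation unions trimmed to a couple of cases for this example
type AxisNation = German | Italy
type AlliedNation = France | GreatBritian
type Nation = Allied of AlliedNation | Axis of AxisNation | Neutral

type BaseEquipment = {
    Id: int; Nation: Nation
    IconId: int
    Description: string; Cost: int
    YearAvailable: int; MonthAvailable: int
    YearRetired: int; MonthRetired: int
    MaximumSpottingRange: int
    GroundDefensePoints: int
    AirDefensePoints: int
    NavalDefensePoints: int
}

// Hypothetical helper: is the equipment in service in the given month?
// Assumes the retirement month itself is no longer available (exclusive bound).
let isAvailable (year: int) (month: int) (e: BaseEquipment) =
    (year, month) >= (e.YearAvailable, e.MonthAvailable)
    && (year, month) < (e.YearRetired, e.MonthRetired)

// Illustrative values only, not taken from the game data
let exampleTank =
    { Id = 1; Nation = Axis German; IconId = 1
      Description = "Example tank"; Cost = 300
      YearAvailable = 1940; MonthAvailable = 3
      YearRetired = 1944; MonthRetired = 1
      MaximumSpottingRange = 1
      GroundDefensePoints = 10; AirDefensePoints = 8; NavalDefensePoints = 10 }

printfn "Available in June 1941: %b" (isAvailable 1941 6 exampleTank)
```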

Once the base equipment was set, I needed to assign attributes to different types of equipment. For example, tanks have motors and therefore have a fuel capacity, while it makes no sense to put a fuel attribute on a horse-drawn unit. Therefore, I needed a movable equipment type and then a motorized movable equipment type:

```fsharp
type MoveableEquipment = {
    MaximumMovementPoints: int
}

type MotorizedEquipment = {
    MoveableEquipment: MoveableEquipment
    MaximumFuel: int
}
```

Also, there are different types of motorized equipment for land (tracked, wheeled, or half-tracked) as well as for sea and air:

```fsharp
type FullTrackEquipment = | FullTrackEquipment of MotorizedEquipment
type HalfTrackEquipment = | HalfTrackEquipment of MotorizedEquipment
type WheeledEquipment = | WheeledEquipment of MotorizedEquipment

type TrackedEquipment =
    | FullTrack of FullTrackEquipment
    | HalfTrack of HalfTrackEquipment

type LandMotorizedEquipment =
    | Tracked of TrackedEquipment
    | Wheeled of WheeledEquipment

type SeaMoveableEquipment = { MotorizedEquipment: MotorizedEquipment }
type AirMoveableEquipment = { MoveableEquipment: MoveableEquipment }
```
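Each land wrapper ultimately holds a `MotorizedEquipment`, so one function can reach it regardless of the drive type. Here is a minimal sketch; the `motorizedOf` name is hypothetical. Nested patterns walk through all three layers. A single or-pattern covers every case because each alternative binds `m` to the same type.

```fsharp
type MoveableEquipment = { MaximumMovementPoints: int }
type MotorizedEquipment = { MoveableEquipment: MoveableEquipment; MaximumFuel: int }

type FullTrackEquipment = | FullTrackEquipment of MotorizedEquipment
type HalfTrackEquipment = | HalfTrackEquipment of MotorizedEquipment
type WheeledEquipment = | WheeledEquipment of MotorizedEquipment

type TrackedEquipment =
    | FullTrack of FullTrackEquipment
    | HalfTrack of HalfTrackEquipment

type LandMotorizedEquipment =
    | Tracked of TrackedEquipment
    | Wheeled of WheeledEquipment

// Hypothetical helper: peel off every wrapper to reach the shared motorized record
let motorizedOf (equipment: LandMotorizedEquipment) =
    match equipment with
    | Tracked (FullTrack (FullTrackEquipment m))
    | Tracked (HalfTrack (HalfTrackEquipment m))
    | Wheeled (WheeledEquipment m) -> m

// Illustrative values only
let halfTrack =
    Tracked (HalfTrack (HalfTrackEquipment { MoveableEquipment = { MaximumMovementPoints = 6 }; MaximumFuel = 50 }))
let points = (motorizedOf halfTrack).MoveableEquipment.MaximumMovementPoints
printfn "Half-track movement points: %d" points
```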

With movement out of the way, I turned to combat: some equipment can engage in combat (like a tank) and some cannot (like a transport). The combat types are split by the kind of target being attacked:

```fsharp
type LandTargetCombatEquipment = {
    CombatEquipment: CombatEquipment
    HardAttackPoints: int
    SoftAttackPoints: int
}

type AirTargetCombatEquipment = {
    CombatEquipment: CombatEquipment
    AirAttackPoints: int
}

type NavalTargetCombatEquipment = {
    CombatEquipment: CombatEquipment
    NavalAttackPoints: int
}
```
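In Panzer General, hard attack values are used against armored (hard) targets and soft attack values against unarmored (soft) targets. The sketch below picks the right value for a land target. `TargetKind` and `landAttackPoints` are placeholders I assumed for this example. So is the single `Initiative` field given to `CombatEquipment`, because the post does not show that type.

```fsharp
// Placeholder: the post does not show CombatEquipment, so one assumed field stands in for it
type CombatEquipment = { Initiative: int }

type LandTargetCombatEquipment = {
    CombatEquipment: CombatEquipment
    HardAttackPoints: int
    SoftAttackPoints: int
}

// Hypothetical: is the defender armored (hard) or unarmored (soft)?
type TargetKind =
    | HardTarget
    | SoftTarget

// Choose the attack value that applies to the kind of target being attacked
let landAttackPoints (target: TargetKind) (attacker: LandTargetCombatEquipment) =
    match target with
    | HardTarget -> attacker.HardAttackPoints
    | SoftTarget -> attacker.SoftAttackPoints

// Illustrative numbers only, not taken from the game data
let exampleGun = { CombatEquipment = { Initiative = 5 }; HardAttackPoints = 9; SoftAttackPoints = 6 }
printfn "Against armor: %d" (landAttackPoints HardTarget exampleGun)
```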

With movement and combat accounted for, I could start building the types of equipment:

```fsharp
type InfantryEquipment = {
    BaseEquipment: BaseEquipment
    EntrenchableEquipment: EntrenchableEquipment
    MoveableEquipment: MoveableEquipment
    LandTargetCombatEquipment: LandTargetCombatEquipment
}

type TankEquipment = {
    BaseEquipment: BaseEquipment
    FullTrackedEquipment: FullTrackEquipment
    LandTargetCombatEquipment: LandTargetCombatEquipment
}
```

There are twenty-two different equipment types; you can see them all in the GitHub repository.

With the equipment out of the way, I was ready to start creating units. Units have a few stats, like name and strength, as well as how much ammo and experience they have if they are combat units:

```fsharp
type UnitStats = {
    Id: int
    Name: string
    Strength: int
}

type ReinforcementType =
    | Core
    | Auxiliary

type CombatStats = {
    Ammo: int
    Experience: int
    ReinforcementType: ReinforcementType
}

type MotorizedMovementStats = {
    Fuel: int
}
```

With these basic attributes accounted for, I could then make units of the different equipment types. For example:

```fsharp
type InfantryUnit = {
    UnitStats: UnitStats; CombatStats: CombatStats; Equipment: InfantryEquipment
    CanBridge: bool; CanParaDrop: bool }

type TankUnit = {
    UnitStats: UnitStats; CombatStats: CombatStats; MotorizedMovementStats: MotorizedMovementStats
    Equipment: TankEquipment }
```
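The payoff of putting `MotorizedMovementStats` on `TankUnit` but not on `InfantryUnit` shows up when a unit spends fuel for a move. Here is a minimal sketch with three assumptions: `trySpendFuel` is hypothetical, the `Equipment` field is left off `TankUnit` to keep things short, and returning `None` when the tank lacks fuel is an assumed rule. Only motorized unit types carry a fuel field, so the compiler rejects any attempt to pass an infantry unit to this function.

```fsharp
type UnitStats = { Id: int; Name: string; Strength: int }
type ReinforcementType = Core | Auxiliary
type CombatStats = { Ammo: int; Experience: int; ReinforcementType: ReinforcementType }
type MotorizedMovementStats = { Fuel: int }

// Trimmed: the Equipment field is left out of this sketch
type TankUnit = {
    UnitStats: UnitStats
    CombatStats: CombatStats
    MotorizedMovementStats: MotorizedMovementStats
}

// Hypothetical: spend fuel for a move, or None if the tank cannot afford it
let trySpendFuel (cost: int) (tank: TankUnit) =
    let remaining = tank.MotorizedMovementStats.Fuel - cost
    if remaining < 0 then None
    else Some { tank with MotorizedMovementStats = { Fuel = remaining } }

// Illustrative values only
let tank =
    { UnitStats = { Id = 1; Name = "Example tank unit"; Strength = 10 }
      CombatStats = { Ammo = 6; Experience = 0; ReinforcementType = Core }
      MotorizedMovementStats = { Fuel = 40 } }

match trySpendFuel 15 tank with
| Some moved -> printfn "Fuel left: %d" moved.MotorizedMovementStats.Fuel
| None -> printfn "Not enough fuel"
```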

PG also has different kinds of infantry units like this:

```fsharp
type Infantry =
    | Basic of InfantryUnit
    | HeavyWeapon of InfantryUnit
    | Engineer of InfantryUnit
    | Airborne of InfantryUnit
    | Ranger of InfantryUnit
    | Bridging of InfantryUnit
```
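Sometimes code needs the shared `InfantryUnit` record regardless of the infantry kind. Each case carries the same record type, so an or-pattern handles all six cases in one arm. In this sketch, `infantryUnit` is a hypothetical helper, and `InfantryUnit` is trimmed to a few fields.

```fsharp
// Trimmed stand-in for the post's InfantryUnit record
type InfantryUnit = { Name: string; CanBridge: bool; CanParaDrop: bool }

type Infantry =
    | Basic of InfantryUnit
    | HeavyWeapon of InfantryUnit
    | Engineer of InfantryUnit
    | Airborne of InfantryUnit
    | Ranger of InfantryUnit
    | Bridging of InfantryUnit

// Every case wraps the same record type, so one or-pattern reaches it
let infantryUnit (infantry: Infantry) =
    match infantry with
    | Basic u | HeavyWeapon u | Engineer u
    | Airborne u | Ranger u | Bridging u -> u

// Illustrative values only
let pioneers = Engineer { Name = "Example pioneers"; CanBridge = true; CanParaDrop = false }
let pioneerUnit = infantryUnit pioneers
printfn "%s can bridge: %b" pioneerUnit.Name pioneerUnit.CanBridge
```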

Then all of the land combat units can be defined as:

```fsharp
type LandCombat =
    | Infantry of Infantry
    | Tank of TankUnit
    | Recon of ReconUnit
    | TankDestroyer of TankDestroyerUnit
    | AntiAir of AntiAirUnit
    | Emplacement of Emplacement
    | AirDefense of AirDefense
    | AntiTank of AntiTank
    | Artillery of Artillery
```

There are a bunch more for sea and air, which you can see in the GitHub repository. Once they are all defined, they can be brought together like so:

```fsharp
type Transport =
    | Land of LandTransportUnit
    | Air of AirTransportUnit
    | Naval of NavalTransport

type Unit =
    | Combat of Combat
    | Transport of Transport
```

It is interesting to compare this domain model to the C# implementation I created six years ago. The key difference that sticks out to me is taking properties of classes and turning them into types. So instead of a Fuel property on a unit that may or may not be null, there are motorized unit types that require a fuel level. Instead of a bool field like CanAttack or an interface like IAttackable, the behavior is baked into the type.
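As an illustration of behavior living in the type, here is a sketch of `canAttack`. The function is hypothetical, and the unit unions are trimmed to string placeholders. Whether a unit can attack follows from which case it is, so there is no flag to keep in sync and nothing to be null.

```fsharp
// Trimmed stand-ins for the post's unit unions
type Combat =
    | Tank of string
    | Artillery of string

type Transport =
    | Land of string
    | Air of string

type Unit =
    | Combat of Combat
    | Transport of Transport

// Derived from the case rather than stored as a bool field
let canAttack (unit: Unit) =
    match unit with
    | Combat _ -> true
    | Transport _ -> false

printfn "Truck can attack: %b" (canAttack (Transport (Land "Example truck")))
```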

Also, the number of files and the amount of code dropped significantly, which definitely improved the code base.

It is not all fun and games, though, because I still need a mapping function to take the data files from the game and map them to the types, as well as functions to pull actionable data out of the types, like this:

```fsharp
let getMoveableEquipment unit =
    match unit with
    | Unit.Combat c ->
        match c with
        | Combat.Air ac ->
            match ac with
            | AirCombat.Fighter acf ->
                match acf with
                | Fighter.Prop acfp -> Some acfp.Equipment.MotorizedEquipment.MoveableEquipment
                | Fighter.Jet acfj -> Some acfj.Equipment.MotorizedEquipment.MoveableEquipment
            | AirCombat.Bomber acb ->
                match acb with
                | Bomber.Tactical acbt -> Some acbt.Equipment.MotorizedEquipment.MoveableEquipment
                | Bomber.Strategic acbs -> Some acbs.Equipment.MotorizedEquipment.MoveableEquipment
        | Combat.Land lc ->
            match lc with
            // ... the remaining cases are elided here
```
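Nested patterns, which match several layers of unions in one arm, can flatten part of this nesting. Here is a sketch on a trimmed model that contains only the air combat branch. The `AircraftEquipment` and `AircraftUnit` records are stand-ins, not the repository’s types. Merging the arms into one or-pattern only works when every alternative binds `u` to the same type. If prop, jet, and bomber units are distinct record types, each needs its own arm, but the nested patterns still remove the intermediate `match` expressions.

```fsharp
type MoveableEquipment = { MaximumMovementPoints: int }
type MotorizedEquipment = { MoveableEquipment: MoveableEquipment; MaximumFuel: int }

// Stand-ins: in this trimmed model every aircraft unit shares one record type
type AircraftEquipment = { MotorizedEquipment: MotorizedEquipment }
type AircraftUnit = { Name: string; Equipment: AircraftEquipment }

type Fighter =
    | Prop of AircraftUnit
    | Jet of AircraftUnit

type Bomber =
    | Tactical of AircraftUnit
    | Strategic of AircraftUnit

type AirCombat =
    | Fighter of Fighter
    | Bomber of Bomber

// Only the air branch is modeled; land and naval combat are left out
type Combat =
    | Air of AirCombat

// Transport units are left out as well
type Unit =
    | Combat of Combat

// One arm reaches through four layers of unions at once
let getMoveableEquipment (unit: Unit) =
    match unit with
    | Combat (Air (Fighter (Prop u)))
    | Combat (Air (Fighter (Jet u)))
    | Combat (Air (Bomber (Tactical u)))
    | Combat (Air (Bomber (Strategic u))) ->
        Some u.Equipment.MotorizedEquipment.MoveableEquipment

// Illustrative values only
let jet =
    Combat (Air (Fighter (Jet { Name = "Example jet"
                                Equipment = { MotorizedEquipment = { MoveableEquipment = { MaximumMovementPoints = 9 }
                                                                     MaximumFuel = 60 } } })))
printfn "%A" (getMoveableEquipment jet)
```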

So far, that trade-off seems worth it. I only have to write these supporting functions once, and the compiler then enforces the model across the entire code base, without relying on hopes, prayers, and unit tests.

Once I had the units set up, I followed a similar exercise for terrain. The real fun for me came with the next module: the series of functions that calculate a unit’s movement across terrain. Each tile has a movement cost that depends on the unit’s movement type, the terrain type, and the terrain’s condition (tanks move slower over muddy ground):

```fsharp
// Look up the cost row for this movement type, terrain condition, and terrain type
let getMovmentCost (movementTypeId: int) (terrainConditionId: int)
                   (terrainTypeId: int) (mcs: MovementCostContext.MovementCost array) =
    mcs
    |> Array.tryFind (fun mc ->
        mc.MovementTypeId = movementTypeId &&
        mc.TerrainConditionId = terrainConditionId &&
        mc.TerrainTypeId = terrainTypeId)
```

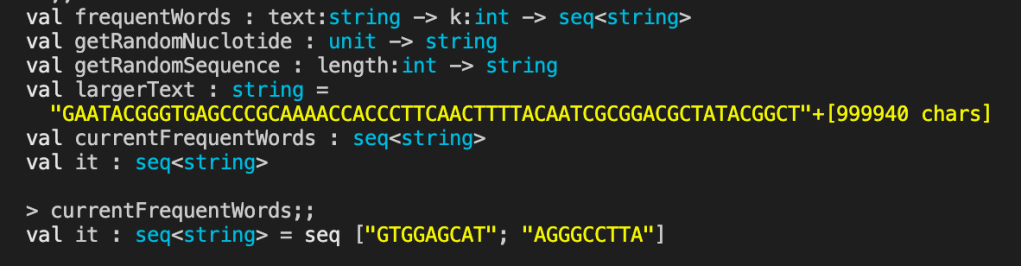
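`getMovmentCost` is called for every candidate tile, so a linear `Array.tryFind` over the whole cost table runs many times per move. One alternative, sketched here under assumptions, is to build a `Map` keyed by the three ids once and look costs up from it. The `MovementCost` record stands in for the type-provider row, and its `Cost` field is an assumed column name. The ids and costs are made up for the example.

```fsharp
// Stand-in for MovementCostContext.MovementCost; the Cost column name is assumed
type MovementCost = {
    MovementTypeId: int
    TerrainConditionId: int
    TerrainTypeId: int
    Cost: int
}

// The post's linear lookup, using the stand-in row type
let getMovmentCost (movementTypeId: int) (terrainConditionId: int) (terrainTypeId: int) (mcs: MovementCost array) =
    mcs
    |> Array.tryFind (fun mc ->
        mc.MovementTypeId = movementTypeId &&
        mc.TerrainConditionId = terrainConditionId &&
        mc.TerrainTypeId = terrainTypeId)

// Alternative: index the table once by (movement type, condition, terrain type)
let buildCostTable (mcs: MovementCost array) =
    mcs
    |> Array.map (fun mc -> (mc.MovementTypeId, mc.TerrainConditionId, mc.TerrainTypeId), mc.Cost)
    |> Map.ofArray

let tryGetCost movementTypeId terrainConditionId terrainTypeId (table: Map<int * int * int, int>) =
    Map.tryFind (movementTypeId, terrainConditionId, terrainTypeId) table

// Made-up ids: movement type 1 = tracked, condition 1 = dry / 2 = muddy, terrain 1 = clear
let costs =
    [|
        { MovementTypeId = 1; TerrainConditionId = 1; TerrainTypeId = 1; Cost = 1 }
        { MovementTypeId = 1; TerrainConditionId = 2; TerrainTypeId = 1; Cost = 2 }
    |]
let table = buildCostTable costs
printfn "Tracked over muddy clear terrain: %A" (tryGetCost 1 2 1 table)
```

Both lookups return an option, so a missing combination still surfaces as `None` rather than an exception.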

I needed the ability to calculate all possible movable tiles for a given unit. There are some supporting functions that you can review in the repository, and the final calculator, which I am very happy with, looks like this:

```fsharp
let getMovableTiles (board: Tile array) (landCondition: LandCondition) (tile: Tile) (unit: Unit) =
    let baseTile = getBaseTile tile
    let maximumDistance = (getUnitMovementPoints unit) - 1
    let accumulator = Array.zeroCreate<Tile option> 0
    let adjacentTiles = getExtendedAdjacentTiles accumulator board tile 0 maximumDistance
    adjacentTiles
    |> Array.choose id                                             // keep only the Some tiles
    |> Array.map (fun t -> t, getBaseTile t)                       // pair each tile with its base tile
    |> Array.filter (fun (_, bt) -> bt.EarthUnit.IsNone)           // no ground unit already there
    |> Array.filter (fun (_, bt) -> canLandUnitsEnter bt.Terrain)  // terrain passable for land units
    |> Array.map fst
```
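The supporting `getExtendedAdjacentTiles` function lives only in the repository. Here is a self-contained sketch of the same idea with one variation: a breadth-first search over a hex grid that only expands through tiles a unit may enter. As a result, tiles reachable only through a blocked or occupied tile are excluded. This is an alternative technique, not the repository’s code. The axial `Hex` coordinates and the `canEnter` predicate are assumptions standing in for `Tile`, `EarthUnit`, and `canLandUnitsEnter`. Every step costs one movement point rather than a terrain-dependent cost.

```fsharp
// Axial hex coordinates: an assumption for this sketch, not the repository's Tile type
type Hex = { Q: int; R: int }

// The six neighbors of a hex in axial coordinates
let neighbors (h: Hex) =
    [ (1, 0); (1, -1); (0, -1); (-1, 0); (-1, 1); (0, 1) ]
    |> List.map (fun (dq, dr) -> { Q = h.Q + dq; R = h.R + dr })

// Breadth-first search: every hex reachable in at most maxSteps moves, expanding
// only through hexes that canEnter accepts (on the board, passable, unoccupied).
// The start hex is excluded from the result.
let reachableHexes (canEnter: Hex -> bool) (maxSteps: int) (start: Hex) =
    let rec expand (frontier: Hex list) (visited: Set<Hex>) (steps: int) =
        if steps = maxSteps || List.isEmpty frontier then
            visited
        else
            let next =
                frontier
                |> List.collect neighbors
                |> List.distinct
                |> List.filter (fun h -> not (visited.Contains h) && canEnter h)
            let visited' = List.fold (fun acc h -> Set.add h acc) visited next
            expand next visited' (steps + 1)
    expand [ start ] (Set.singleton start) 0 |> Set.remove start

let origin = { Q = 0; R = 0 }
let openBoard = reachableHexes (fun _ -> true) 2 origin
printfn "%d hexes within two moves" (Set.count openBoard)
```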

and the results show:

With the domain set up, I can now concentrate on the gameplay.